Message Driven Microservices & Monoliths with Micronaut - Part 2: Consuming Messages

Posted By: Todd Sharp on 1/25/2021 12:00 GMT

Tagged: Cloud, Developers, Java

In our last post, we talked about why messaging is important in modern applications. We set up a local Kafka broker and created a basic e-commerce microservice that handled orders and published a message to the broker whenever a new order was received. It is a real-life case of two services that need to communicate with each other in a decoupled and fail-safe manner, which is exactly where reliable messaging matters.

But that was only half of the story. Producing messages and consuming them from a simple terminal window is cool, but we can take the example a step further and create a basic “shipping” service that consumes the messages published by our order service, “ships” the order and then notifies the order service of the updated shipping status.

Create a Shipping Topic

Before we create the shipping service, first add another topic to our Kafka broker. We can do this in exactly the same way that we created the order topic: by using the script located in the Kafka bin directory.

That’s all of the pre-work that we need to do for our shipping service.

Create the Shipping Service

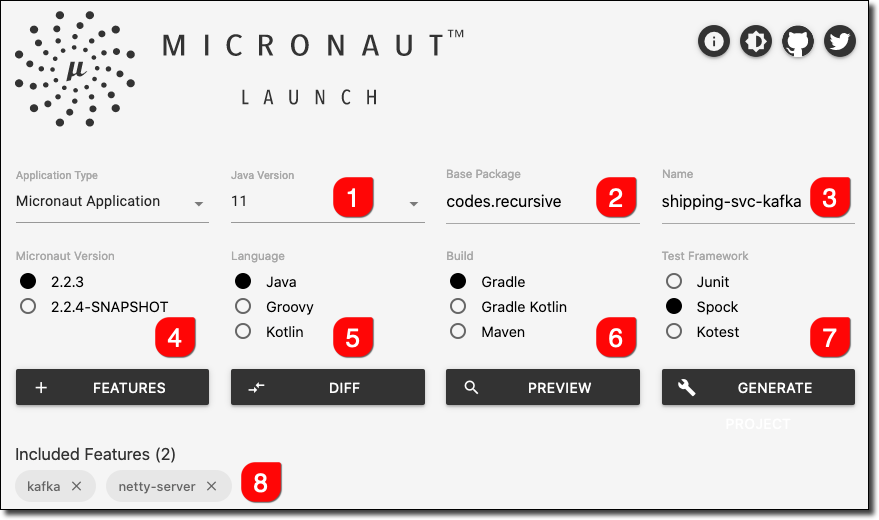

Now we’ll bootstrap the shipping service using Micronaut Launch, just as we did for our order service. Choose Java 11 and Gradle, and add the Netty Server and Kafka features again.

Download it, unzip it and open it with your favorite IDE.

Our config will be handled the same way as in the order service, so create a file called application-local.yml in the resources directory. This time, set the shipping service to run on port 8081 to prevent any conflicts with the order service.

Let’s create a Shipment domain object to represent the order shipment with the properties id, orderId and a shippedOn date.
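A minimal sketch of such a domain object might look like the following. The post only names the three properties, so the field types (UUID for the ids, Instant for the date) and the choice of plain getters and setters are assumptions made for illustration.

```java
import java.time.Instant;
import java.util.UUID;

// Sketch of the Shipment domain object with the three properties named in the post.
// The field types are assumptions; only the property names come from the text.
class Shipment {
    private UUID id;
    private UUID orderId;
    private Instant shippedOn;

    // No-arg constructor so a JSON (de)serializer can instantiate the object.
    Shipment() {
    }

    Shipment(UUID id, UUID orderId, Instant shippedOn) {
        this.id = id;
        this.orderId = orderId;
        this.shippedOn = shippedOn;
    }

    UUID getId() { return id; }
    void setId(UUID id) { this.id = id; }

    UUID getOrderId() { return orderId; }
    void setOrderId(UUID orderId) { this.orderId = orderId; }

    Instant getShippedOn() { return shippedOn; }
    void setShippedOn(Instant shippedOn) { this.shippedOn = shippedOn; }
}
```

Keeping a no-arg constructor and setters alongside the full constructor is a common choice for objects that travel as JSON message payloads, since many serializers rely on them.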

For model consistency, add the Shipment object as shown above to the order-svc-kafka project, and bring the Order and ShipmentStatus model objects into this shipping-svc-kafka project as well. We’ll want to keep our domain objects in sync, and even though this means a bit of redundant code, it is necessary. In reality, you may want to create a separate Java project to manage your shared model objects and import that into each project. That architecture decision seems to be somewhat controversial with developers, so I’ll leave the implementation up to you and your team’s best practices. Let me remind you what they look like here for the sake of this demo.

Now we’ll need a ShippingService. Again, instead of properly persisting the shipments to a database backend, I will be using a synchronized List to store them in memory. A synchronized list isn’t the most performant solution since it locks the List on every access, but this is only mock persistence in lieu of a real backend and it keeps the faux database thread-safe, so we’ll go with it.
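As a rough, dependency-free sketch of that idea, the service could look something like this. The newShipment name comes from later in this post; the other method names, the nested stand-in Shipment type and its field types are assumptions. In the actual Micronaut project the class would normally also be annotated with @Singleton so that it can be injected, which is left out here to keep the example plain Java.

```java
import java.time.Instant;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Optional;
import java.util.UUID;

// Mock persistence: shipments live in a synchronized in-memory list.
class ShippingService {

    // Compact stand-in for the Shipment domain object (field types are assumptions).
    static final class Shipment {
        final UUID id;
        final UUID orderId;
        final Instant shippedOn;

        Shipment(UUID id, UUID orderId, Instant shippedOn) {
            this.id = id;
            this.orderId = orderId;
            this.shippedOn = shippedOn;
        }
    }

    // The wrapper locks on the list for each individual call (add, get, size, ...).
    private final List<Shipment> shipments =
            Collections.synchronizedList(new ArrayList<>());

    Shipment newShipment(UUID orderId) {
        Shipment shipment = new Shipment(UUID.randomUUID(), orderId, Instant.now());
        shipments.add(shipment);
        return shipment;
    }

    Optional<Shipment> getShipmentByOrderId(UUID orderId) {
        // Traversal is not protected by the per-call locking,
        // so hold the list's lock manually while streaming.
        synchronized (shipments) {
            return shipments.stream()
                    .filter(s -> s.orderId.equals(orderId))
                    .findFirst();
        }
    }

    // Returns up to `limit` shipments, newest first.
    List<Shipment> getRecentShipments(int limit) {
        synchronized (shipments) {
            int from = Math.max(0, shipments.size() - limit);
            List<Shipment> recent =
                    new ArrayList<>(shipments.subList(from, shipments.size()));
            Collections.reverse(recent);
            return recent;
        }
    }
}
```

Note the explicit synchronized blocks around the stream and the subList copy: Collections.synchronizedList only guards single method calls, so compound reads still need the caller to take the lock.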

As you can see above, we’re just storing each Shipment in the List and have a few methods for some CRUD operations. Next, create a ShippingController with a single method - getRecentShipments(). We won’t really need to expose many endpoints here because the shipping service handles most operations “behind the scenes”.

Start up the shipping service with:

And check for recent shipments (which will of course be empty at this point).

Consuming Order Messages

Now we can move on to the fun part - consuming orders! Again, the Micronaut CLI makes it easy to create a consumer.

This creates an empty listener for us.

Note that the @KafkaListener annotation allows us to specify an offset reset strategy, which controls where the listener starts reading when its consumer group has no committed offset yet. The choices here are EARLIEST and LATEST. You can choose whichever is most appropriate for your application. Now let’s populate the listener by injecting our ShippingService and adding a receive() method that will output a message into our console log each time an order is received and ship the order via the ShippingService.
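The shape of that listener can be sketched in plain Java as follows. To keep the example compilable without Micronaut on the classpath, the Kafka annotations (which live in io.micronaut.configuration.kafka.annotation) appear only as comments. The Order and Shipper types are hypothetical stand-ins for the real Order model and the injected ShippingService, and the topic name is left as a placeholder for whatever you called the order topic.

```java
import java.util.UUID;

// Hypothetical stand-in for the Order model; only an id is assumed here.
class Order {
    final UUID id;

    Order(UUID id) {
        this.id = id;
    }
}

// Hypothetical stand-in for the injected ShippingService.
interface Shipper {
    void newShipment(UUID orderId);
}

// In the real service: @KafkaListener(offsetReset = OffsetReset.EARLIEST)
class OrderConsumer {
    private final Shipper shippingService;

    // Micronaut would supply this argument through constructor injection.
    OrderConsumer(Shipper shippingService) {
        this.shippingService = shippingService;
    }

    // In the real service: @Topic("<your order topic>")
    void receive(Order order) {
        System.out.println("Order received: " + order.id);
        shippingService.newShipment(order.id);
    }
}
```

Because the listener only depends on a small interface, it can be exercised without a broker, for example with `new OrderConsumer(id -> {}).receive(new Order(UUID.randomUUID()))`.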

Re-run the shipping service (and make sure any console consumers are stopped) and place a new “order” with the order service.

Observe the shipping service console to see it log the new order when it is received.

To keep an eye on recent shipments, check the proper endpoint.

The Beauty of Messaging

So this is pretty amazing stuff, I know. Our microservices are communicating with each other in a decoupled and reliable manner. To illustrate the resiliency of our services so far, go ahead and stop the shipping service. Yep, just stop it. Now, place a few orders in the order service. Then wait. Go get some coffee or tea - and come back in a few minutes and restart the shipping service. What happens? You got it - the shipping service picks up right where it left off and ships the orders even though it’s been a few minutes since it was online. This works because the broker retains the messages and the consumer resumes reading from the last offset it committed.
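To make the "picks up right where it left off" behavior concrete, here is a toy model of a retained log with a committed offset. It is only an illustration of the idea and is not how Kafka is implemented; the class and method names are made up for this sketch.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model: the "broker" keeps every message, and the consumer group
// remembers the position of the next message it has not yet read.
class TopicLogSketch {
    private final List<String> log = new ArrayList<>();
    private int committedOffset = 0;

    // Producers append regardless of whether a consumer is online.
    void publish(String message) {
        log.add(message);
    }

    // Returns everything published since the committed offset, then advances it.
    List<String> poll() {
        List<String> batch = new ArrayList<>(log.subList(committedOffset, log.size()));
        committedOffset = log.size();
        return batch;
    }
}
```

Publishing two messages while nobody is polling and then calling poll() returns both of them, which mirrors what happens when the shipping service comes back online.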

Notifying the Order Service of New Shipments

The shipping service can now handle incoming orders and ship them as needed, but wouldn’t we also want to update the order status in the order service so that we can provide the proper feedback the next time an order is retrieved? Of course! To do this, we can add a new ShipmentProducer in the shipping microservice. We do this the same way we created the producer in the last post, with the Micronaut CLI.

Populate the ShipmentProducer, annotating the sendMessage method with the new shipping-topic and using our Shipment object as the message type this time.

Next, alter the ShippingService to send a shipment message when the order is shipped. First, inject the new ShipmentProducer:

Next, modify the newShipment method to send the message.
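Put together, the producer and the modified service might look roughly like this. The sketch is plain Java so that it compiles on its own: in the real project the ShipmentProducer interface would carry @KafkaClient, its method would carry @Topic("shipping-topic"), and Micronaut would generate the implementation. The key parameter (which would be marked @KafkaKey), the stand-in Shipment type and its field types are assumptions, and the in-memory list from earlier is omitted for brevity.

```java
import java.time.Instant;
import java.util.UUID;

// Stand-in for the Shipment domain object (field types are assumptions).
class Shipment {
    final UUID id;
    final UUID orderId;
    final Instant shippedOn;

    Shipment(UUID id, UUID orderId, Instant shippedOn) {
        this.id = id;
        this.orderId = orderId;
        this.shippedOn = shippedOn;
    }
}

// In the real service: @KafkaClient on the interface.
interface ShipmentProducer {
    // In the real service: @Topic("shipping-topic"), with the key marked @KafkaKey.
    void sendMessage(String key, Shipment shipment);
}

class ShippingService {
    private final ShipmentProducer shipmentProducer;

    // Micronaut would supply the producer through constructor injection.
    ShippingService(ShipmentProducer shipmentProducer) {
        this.shipmentProducer = shipmentProducer;
    }

    Shipment newShipment(UUID orderId) {
        Shipment shipment = new Shipment(UUID.randomUUID(), orderId, Instant.now());
        // Storing the shipment in the in-memory list is omitted in this sketch.
        shipmentProducer.sendMessage(shipment.id.toString(), shipment);
        return shipment;
    }
}
```

Since ShipmentProducer has a single method, a lambda such as `(key, shipment) -> {}` can stand in for the Kafka client in a unit test.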

Now we can head back to our order-svc-kafka project and create a consumer that will receive the updated shipping status.

Modify the ShipmentConsumer so that the order status is updated when the shipping message is received.
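One way the status update could be wired is sketched below in plain Java. SHIPPED is the status mentioned in this post; the PENDING value, the OrderStatusStore class and its method names are hypothetical. For brevity the receive method takes only the order id, whereas the real listener would receive the whole Shipment and read its orderId. The Micronaut annotations are shown as comments.

```java
import java.util.Map;
import java.util.UUID;
import java.util.concurrent.ConcurrentHashMap;

// SHIPPED appears in the post; PENDING is a hypothetical initial state.
enum ShipmentStatus { PENDING, SHIPPED }

// Hypothetical, trimmed-down order store: order id -> current shipment status.
class OrderStatusStore {
    private final Map<UUID, ShipmentStatus> statuses = new ConcurrentHashMap<>();

    void placeOrder(UUID orderId) {
        statuses.put(orderId, ShipmentStatus.PENDING);
    }

    ShipmentStatus statusOf(UUID orderId) {
        return statuses.get(orderId);
    }

    // Only updates orders that exist; returns false for unknown ids.
    boolean markShipped(UUID orderId) {
        return statuses.replace(orderId, ShipmentStatus.SHIPPED) != null;
    }
}

// In the real order service: @KafkaListener(offsetReset = OffsetReset.EARLIEST)
class ShipmentConsumer {
    private final OrderStatusStore orders;

    ShipmentConsumer(OrderStatusStore orders) {
        this.orders = orders;
    }

    // In the real order service: @Topic("shipping-topic")
    void receive(UUID shippedOrderId) {
        if (!orders.markShipped(shippedOrderId)) {
            System.out.println("Shipment received for unknown order " + shippedOrderId);
        }
    }
}
```

Ignoring (and logging) shipments for unknown orders is a design choice made for this sketch; a production service would need to decide how to handle messages it cannot match.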

Now place another new order.

Immediately check the status of the new order:

Observe the shipping console:

Now we can check the order service status once again and observe that the shipment status has been updated to SHIPPED!

Summary

In this post, we added a shipment microservice that listens for new orders placed with the order microservice, ships the orders and notifies the order service of the updated shipment status. As you can see, communicating with message brokers is not difficult. Messaging allows us to keep our services lean, focused and responsive while being tolerant of network partitions or system failure. I’d call that a win! Stay tuned for the next post where we’ll look at hosted alternatives to Kafka to make life even easier!

Check out the code used in this post on GitHub at: https://github.com/recursivecodes/shipping-svc-kafka.

Image by Quang Nguyen vinh from Pixabay
