Message Driven Microservices & Monoliths with Micronaut - Part 1: Installing Kafka & Sending Your First Message

Posted By: Todd Sharp on 1/19/2021 5:00 GMT

Tagged: Cloud, Developers, Java

One of the biggest challenges that developers face when working in a microservice environment is ensuring data consistency. Distributed services must be able to communicate with each other in order for your application to deliver all of the requirements and functionality that your users expect. Think about an e-commerce application, for example. Your shipment service needs to know when an order is placed, right? Your order service, in turn, should also know when that order has been shipped! Even if your application is less “pure microservice” and more of a hybrid approach, you probably have at least a few distributed services that ultimately need to communicate with each other. Communication between distributed services is challenging, and I want to show you a few tricks for simplifying this difficult task by using Micronaut and some fairly popular messaging solutions. Over the next few posts, we’ll break this down to help you implement messaging in your applications using some popular open source tools as well as an Oracle Cloud-based option that can be plugged into your service with very little setup and configuration.

You may be asking yourself why this approach is even necessary. The obvious and traditional approach would be to have your services communicate with each other over HTTP via REST endpoints, but that’s asking for trouble. What happens if one (or more) of your services is down? That could mean that an incoming order to your application never gets shipped, and that’s not good for business! A better option would be to use a message queue to pass system events and messages between your services. In this post, we will get started by setting up Kafka to use as our message broker and getting our initial services set up to talk to the Kafka broker.

I’m going to focus on Micronaut in this series because it solves the problem of messaging in your Java services with built-in support for Kafka (as well as MQTT, which we’ll look at later on in this series). We’ll walk through the steps together, but if you want to read more about the built-in support for Kafka in Micronaut, bookmark and refer to the docs. In fact, I’ve covered some of the technical details on how to connect Micronaut to an Oracle Cloud Streaming Service endpoint in a previous blog post, but in this series, we’re going to discuss more of the “why” along with the “how” to give the concept more context and demonstrate some use cases.

Installing Kafka Locally

The first thing we’ll do here is to download Apache Kafka so that we can use it as our message broker locally. I’m quite sure you’ve heard of Kafka - it’s massively popular and in use at many companies today. That said, my experience has shown me that no matter how popular a library, tool, or service is, there is often a good portion of developers who are still unfamiliar or uncomfortable with it. If that’s you, no worries - let's quickly discuss. Kafka is an open source streaming tool that lets you produce (sometimes called publish) key/value based messages to a queue and later on consume (or subscribe to) those messages in the order in which they were published. There is much more to the Kafka ecosystem, but if you’re new to working with it then you now have enough basic knowledge to move forward with this series.

Let's install Kafka. Most of these instructions come from the Kafka Quickstart, but I’ll post them here to save you a click. You’ll need to first download the latest binary version of Kafka (which, at the time of writing, is 2.7.0).

Next, unzip it and switch to the directory where it was unzipped.

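Kafka depends on a service called ZooKeeper (for now; this dependency is slated to go away in the future). Start ZooKeeper in a console window/tab by running the `bin/zookeeper-server-start.sh` script with the bundled `config/zookeeper.properties` file.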

Now we can start the Kafka Broker Service itself. In another console/tab, run the `bin/kafka-server-start.sh` script with the bundled `config/server.properties` file.

Cool, easy. Now that ZooKeeper and the Kafka Broker are running (the broker runs on `localhost:9092`), open a third console window/tab. We’ll now create a few “topics” in our running broker that we’ll use later on from our Micronaut service. Since we’re going with an “e-commerce” example here, create an “Order” topic (referred to later in this post as order-topic) by using the `kafka-topics.sh` script in the `bin` directory.

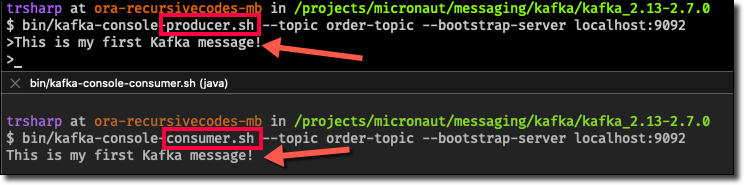

Kafka includes a few utility scripts that we can use to test producing to and consuming from the brand new topics. If you want to test out the new topics, open a new console window and start a producer with the `kafka-console-producer.sh` script.

You’ll notice that the producer script gives you a prompt that can be used to enter your message. Before you try it out, open one more console window/tab and start a consumer with the `kafka-console-consumer.sh` script to listen to the topic.

The consumer script runs and patiently waits for an incoming message. Jump back to your producer console and type a message - anything you’d like.

We’ve got our broker up and running and we’ve published a message to confirm that our topic works as expected. Now let’s move on to creating our “Order” service with Micronaut.

Creating the Order Service

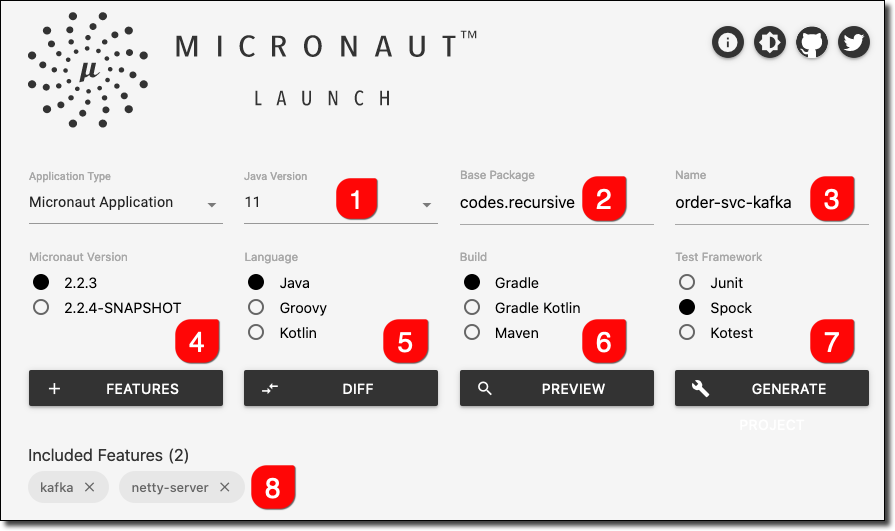

For our e-commerce example, we’ll need to first create our Micronaut app. The easiest way to do this is to use Micronaut Launch. We’ll use Java 11 and Gradle, and add the Kafka and Netty Server features.

Once the bootstrapped application has been downloaded, we can unzip it and open it up in our favorite editor.

Order Service Config

Let's set up a config file for the app that will be used when we are connecting up to our local Kafka broker. Create a new file in resources/ called application-local.yml and populate it as such:

To make sure that this configuration is used when we launch the application, pass in the following system property when launching the app: -Dmicronaut.environments=local.

Creating a Domain, Service, and Controller

Our order microservice will need a domain object to represent our e-commerce orders, a service to mock persistence, and a controller to handle incoming requests. First, create a class to represent the Order in a package called domain and add 4 properties: id, customerId, totalCost and shipmentStatus. Add a constructor and getters/setters as you would any normal domain entity. Of course, this object is greatly simplified, but I think it properly illustrates the point without needlessly complicating this example.

Add a simple enum for the ShipmentStatus:

Next, let's create an OrderService to simulate a proper persistence tier. Certainly, Micronaut Data would be a logical choice for a true persistence tier, but again I’d like to keep this service focused on the messaging aspect, so I will forgo it for this demo and just use a simple List to store orders in memory whilst the service is running.
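To make the pieces described in this section concrete, here is a minimal sketch of the Order domain class, the ShipmentStatus enum, and an in-memory OrderService. It is plain Java with no Micronaut annotations so that it compiles by itself. Several details are assumptions on my part rather than something stated above: the property types (Long and BigDecimal), the SHIPPED enum value, the method names on the service, and the way ids are assigned when none is supplied.

```java
import java.math.BigDecimal;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Objects;

// PENDING is the default mentioned in this post; SHIPPED is an assumed second state.
enum ShipmentStatus { PENDING, SHIPPED }

// Simplified domain object with the four properties: id, customerId, totalCost, shipmentStatus.
class Order {
    private Long id;
    private Long customerId;
    private BigDecimal totalCost;
    private ShipmentStatus shipmentStatus = ShipmentStatus.PENDING; // new orders start as PENDING

    public Order(Long id, Long customerId, BigDecimal totalCost) {
        this.id = id;
        this.customerId = customerId;
        this.totalCost = totalCost;
    }

    public Long getId() { return id; }
    public void setId(Long id) { this.id = id; }
    public Long getCustomerId() { return customerId; }
    public void setCustomerId(Long customerId) { this.customerId = customerId; }
    public BigDecimal getTotalCost() { return totalCost; }
    public void setTotalCost(BigDecimal totalCost) { this.totalCost = totalCost; }
    public ShipmentStatus getShipmentStatus() { return shipmentStatus; }
    public void setShipmentStatus(ShipmentStatus shipmentStatus) { this.shipmentStatus = shipmentStatus; }
}

// Stands in for a real persistence tier by keeping orders in a List.
// Not thread-safe; good enough for a demo only.
class OrderService {
    private final List<Order> orders = new ArrayList<>();
    private long nextId = 1; // assumption: the service hands out ids when the caller omits one

    public List<Order> listOrders() {
        return Collections.unmodifiableList(orders);
    }

    // Returns the matching order, or null when no order has that id.
    public Order getOrderById(Long id) {
        return orders.stream()
                .filter(order -> Objects.equals(order.getId(), id))
                .findFirst()
                .orElse(null);
    }

    public Order newOrder(Order order) {
        if (order.getId() == null) {
            order.setId(nextId++);
        }
        orders.add(order);
        return order;
    }

    // Replaces the stored order that has the same id; returns false if there is none.
    public boolean updateOrder(Order updated) {
        for (int i = 0; i < orders.size(); i++) {
            if (Objects.equals(orders.get(i).getId(), updated.getId())) {
                orders.set(i, updated);
                return true;
            }
        }
        return false;
    }
}
```

In the real service, OrderService would be registered as a Micronaut bean so that it can be injected into the controller; that wiring is left out here.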

And now we’ll add a controller that will give our service some HTTP endpoints for our standard CRUD operations. The OrderController will have methods for listing orders, getting a single order, creating a new order, and updating an existing order. Nothing fancy, just a way to invoke our OrderService methods.

The basic order microservice is now ready for action. We can start it up with:

Testing the Order Service

We could certainly write some unit tests to confirm that our Order Service is working as expected (and I strongly encourage unit tests), but I find that invoking the endpoints via cURL has more visual impact in a blog post. We’ll hit a few endpoints in our terminal to make sure things are working as expected.

First, let's add a few orders. Note that the ShipmentStatus will default to PENDING, so there is no need to pass that in when creating a new order.

We can now list all orders with a GET request to /order.

Or get a single order by passing in the order id.

Publishing Orders

We’ve set up Kafka and created a basic order service so far. In the next step, we’ll publish our orders to the order-topic that we set up earlier. Since we chose the ‘Kafka’ feature above when we bootstrapped the application via Micronaut Launch, all of the dependencies that we need are already set up in our project. We’ll need to update our configuration file to tell Micronaut where our Kafka broker is running, so open up resources/application-local.yml and add the broker config info (Micronaut reads the broker address from the `kafka.bootstrap.servers` property, which for our local broker is `localhost:9092`). When it’s complete, the whole config file should look like so:

Now we can use the Micronaut CLI to add an OrderProducer with its `create-kafka-producer` command.

Which will create a basic producer that looks like this:

We just need to create a single method signature for sendMessage and annotate it with @Topic to point it at our order-topic.

Now we can inject our OrderProducer into our OrderService.

And modify the newOrder method in OrderService to use the OrderProducer to publish the order as a message to the topic. Note that the order will be serialized as JSON and the order JSON will be the body of the message. We’re using a random UUID as the message key, but you can use whatever unique identifier you’d like.
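The shape of the published message (a random UUID key plus the order as a JSON body) can be sketched in plain Java. This is only an illustration under a few assumptions: MessageSender is a hypothetical stand-in for the Micronaut-generated OrderProducer, OrderPublisher is a made-up helper, and the JSON is assembled by hand here, whereas in the actual service Micronaut serializes the order for you.

```java
import java.math.BigDecimal;
import java.util.UUID;

// Hypothetical stand-in for the Kafka producer: one method that takes a key and a body.
interface MessageSender {
    void sendMessage(String key, String body);
}

// Made-up helper showing what gets published when a new order is created.
class OrderPublisher {
    private final MessageSender sender;

    public OrderPublisher(MessageSender sender) {
        this.sender = sender;
    }

    // Hand-built JSON so the sketch has no library dependencies.
    public static String toJson(long id, long customerId, BigDecimal totalCost, String shipmentStatus) {
        return "{\"id\":" + id
                + ",\"customerId\":" + customerId
                + ",\"totalCost\":" + totalCost.toPlainString()
                + ",\"shipmentStatus\":\"" + shipmentStatus + "\"}";
    }

    // Sends the order under a random UUID key and returns the key that was used.
    public String publish(long id, long customerId, BigDecimal totalCost, String shipmentStatus) {
        String key = UUID.randomUUID().toString();
        sender.sendMessage(key, toJson(id, customerId, totalCost, shipmentStatus));
        return key;
    }
}
```

Passing a lambda such as `(key, body) -> System.out.println(key + " " + body)` as the sender is an easy way to see the key and body that a consumer would receive.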

Before we can test this, make sure that you have the simple Kafka consumer still running in a terminal window (or start a new consumer if you’ve already closed it from earlier) on the order-topic.

Now add a new order to the system via cURL:

And observe the consumer console which will receive the message for the new order!

Summary

We covered quite a bit in this post, but hopefully you found it easy to follow and helpful. We set up a local Kafka broker, created a basic e-commerce service for handling orders and published the incoming orders to our Kafka broker. In the next post, we’ll add a shipment service that will consume the order messages, ship the orders and publish a shipment confirmation back to the order service for updating the order status for our users. If you have any questions, please leave a comment below or contact me via Twitter.

Check out the code used in this post on GitHub at https://github.com/recursivecodes/order-svc-kafka.

Photo by Anne Nygård on Unsplash
