Oracle Functions - Connecting To An ATP Database

Posted By: Todd Sharp on 8/1/2019 8:00 GMT

Tagged: Cloud, Developers, Java

Attention! The process described in this post has been superseded by the information in a newer blog post. If you are trying to connect your Oracle Function to an Autonomous DB instance, please read that post instead! This post will be left online for archival purposes.

So you've created, deployed and tested your first serverless function on Oracle Functions. It works great and you're ready to take the next step beyond "Hello, World" to actually create a useful function that can be used in your microservice architecture. In this post we'll take a look at how to connect your serverless function to an Autonomous Transaction Processing (ATP) instance and query the database for some data.

Before I get started, let me address the fact that querying a database is not something that is typically recommended when it comes to serverless functions. By their nature, serverless functions should be lean and not include many dependencies and external libraries. The "cold start" delay is a real thing, and every extra bit of code and every external connection in your function adds to that delay. Ideally your function would call another service in your API to retrieve or manipulate data via HTTP calls, but, let's be honest - there is always an edge case. And in those edge cases, you'll need to understand how to connect to ATP from within your function, so that is what we'll take a look at here today.

To give you a little background if you're brand new to Oracle Functions (which is based entirely on the Fn Project), Oracle Functions are "container-native". This means that each function is a completely self-contained Docker image that is stored in your OCIR Docker Registry and pulled, deployed and run when you invoke your function. If the latest version of the container is already deployed and running, there is virtually no delay when the function is invoked (this is known as a "warm start"). If it is not, the image has to be pulled and started first, and that is the "cold start" delay mentioned above. All serverless platforms face this cold start issue - it is not something unique to Oracle Functions. Rather, it's the flip side of the reason why serverless functions don't cost you a dime when they are not in use.

With that out of the way, let's create a Java function that connects up to an ATP Serverless instance and does some simple queries against a database table.

Note: The instructions below will allow you to connect to both "Serverless" and "Dedicated" Autonomous Transaction Processing instances. If you're using these instructions to connect to a Dedicated ATP instance you will need to ensure that your function is created with a subnet that can access the private subnet that your dedicated instance resides in (you will need to create an ingress rule to allow this communication on port 1521 for TCP). Refer to the documentation for further information.

When using Fn to create a Java function, you can typically rely on the boilerplate Docker image. But in our case, we'll need to add some external dependencies to the container so we'll need to do things a bit differently. Let's get started by creating an application. Applications are simply groups of functions that allow you to utilize shared configurations and compartmentalize your functions under a common group. To create an application, run the following command with the Fn CLI:

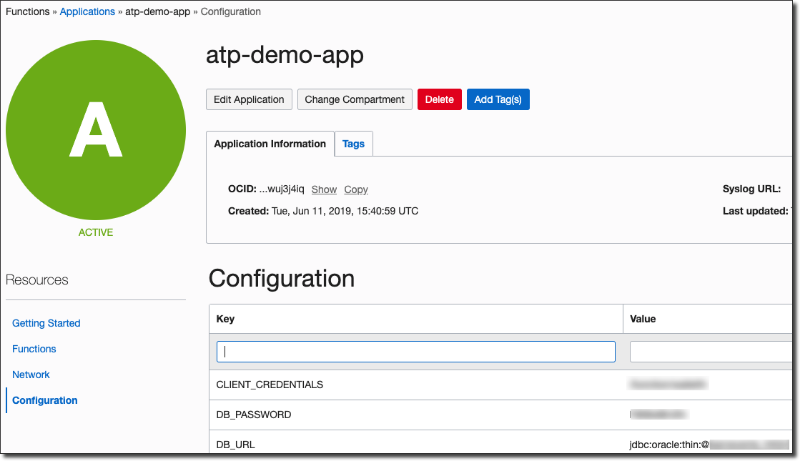

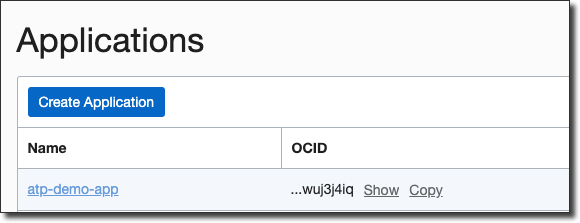

You can confirm that the application was created via the console UI:

Next, let's create the function itself. To do this, we'll take advantage of a feature in Fn that allows you to create your function from an "init-image". This allows us to modify the Dockerfile a bit to make sure that our ATP wallet contents end up inside the Docker image that we produce for our function. Clone the JDK init image into a local directory and then run the following commands to get our function created:

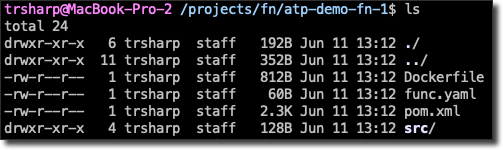

Take a look inside the directory that was created with this function and you'll see that some files have been generated for us:

Notice that the init image has created a Dockerfile for us which we'll ultimately use to make sure our wallet gets into the function image. Before we do that we need to download our ATP wallet that contains the necessary credentials that we will need to connect to ATP. There are several ways to do this, but the easiest is to use the OCI CLI like so:

Make sure to use the correct OCID for your ATP instance and set a strong wallet password with the CLI command. I like to keep mine in a build-resource directory that is ignored from source control. Unzip the wallet and let's take a look at the Dockerfile that was generated for us. If you haven't worked much with Docker you really shouldn't be too concerned as the syntax here is easy to understand and the commands we need to add are very minimal. It should be pretty obvious what is going on:

This Dockerfile uses a build container to perform the Maven build step, then creates our function container based on a slim JDK image, copies the generated JAR file into the function container and sets an entrypoint that is used to invoke the function. Before we go any further, let's deploy our function and test it out to make sure we're on the right track:

Now we can try invoking it. First take a look at the HelloFunction.java file that the init-image created for us:

The function is expecting us to pass in a single string representing a day of the week, so invoke the function and pass one in like so:

Which gave us the expected result. Great, our function is deployed and can be invoked! Now let's get down to adding our wallet credentials to the image. Modify the Dockerfile like so (note the addition on line 17):
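Once the wallet is being copied into the image, it can be handy to confirm from inside the container that the files really landed where you expect. Here is a minimal sketch of such a check. The class name `WalletCheck`, the list of expected files and the default `/function/wallet` path are my own assumptions for illustration, so adjust them to match whatever your Dockerfile actually copies.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

/** Sanity check for a wallet directory that was copied into the function image. */
final class WalletCheck {

    // Assumption: the wallet files this sketch treats as required for a JDBC connection.
    static final List<String> EXPECTED_FILES =
            List.of("tnsnames.ora", "sqlnet.ora", "cwallet.sso");

    /** Returns the names of the expected wallet files that are missing from walletDir. */
    static List<String> missingFiles(Path walletDir) {
        List<String> missing = new ArrayList<>();
        for (String name : EXPECTED_FILES) {
            if (!Files.isRegularFile(walletDir.resolve(name))) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // Hypothetical default location; pass the real wallet path as the first argument.
        Path walletDir = Path.of(args.length > 0 ? args[0] : "/function/wallet");
        List<String> missing = missingFiles(walletDir);
        System.out.println(missing.isEmpty()
                ? "Wallet looks complete in " + walletDir
                : "Missing wallet files in " + walletDir + ": " + missing);
    }
}
```

Calling `missingFiles` once at startup and failing fast with a clear message is usually easier to debug than a driver error about an unresolvable connect string later on.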

Next, create some config variables for your application that will contain the necessary credentials for the database connection:

Note: You should always encrypt any configuration variables that contain sensitive information. Check my guide to using Key Management in OCI to learn how!

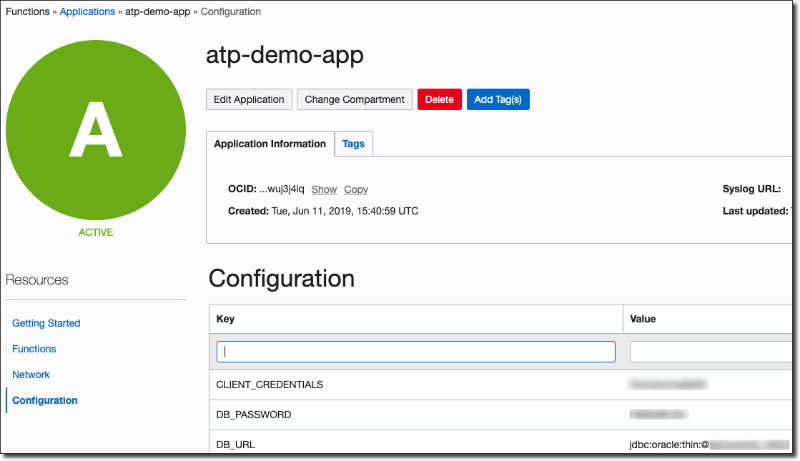

Verify the configuration was set via the console UI:

Configuration variables will now be available within each function as environment variables whose names match the config keys. Next, modify the function in HelloFunction.java as follows to grab the values from the environment and test serializing a simple Map and returning it:
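As a rough sketch of the idea (not the exact listing from my function), reading the config could look something like this. Only `DB_USER` is named in this post; the `DB_PASSWORD` and `DB_URL` keys and the `ConfigEcho` class are assumptions for illustration. In a deployed function the returned Map would be serialized to JSON for you, so here it is simply printed.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Reads database settings from an environment map and echoes back a non-secret value. */
final class ConfigEcho {

    // Assumption: the config keys set on the application (only DB_USER appears in the post).
    static final String[] REQUIRED_KEYS = {"DB_USER", "DB_PASSWORD", "DB_URL"};

    /** Collects the required settings, failing fast if any of them is missing or blank. */
    static Map<String, String> dbConfig(Map<String, String> env) {
        Map<String, String> config = new LinkedHashMap<>();
        for (String key : REQUIRED_KEYS) {
            String value = env.get(key);
            if (value == null || value.isBlank()) {
                throw new IllegalStateException("Missing config variable: " + key);
            }
            config.put(key, value);
        }
        return config;
    }

    /** Handler-shaped method: returns only the user name, never the password. */
    static Map<String, String> handleRequest(Map<String, String> env) {
        return Map.of("DB_USER", dbConfig(env).get("DB_USER"));
    }

    public static void main(String[] args) {
        try {
            System.out.println(handleRequest(System.getenv()));
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

Passing the environment in as a Map (rather than calling `System.getenv` deep inside the method) keeps the lookup easy to test without touching real credentials.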

Deploy and test again, and you should see the DB_USER variable sent back within a JSON object:

Awesome, now we have everything we need to talk to the ATP instance right inside our function. Assuming we have a table in our database with the following structure:

We need to make sure that we have the OJDBC dependencies within the Docker image. Modify the pom.xml as follows:

Now modify the Dockerfile to make sure we are copying the dependencies into the build container and installing them locally so they will be properly resolved (note lines 6-11):

We can now query the database like so:

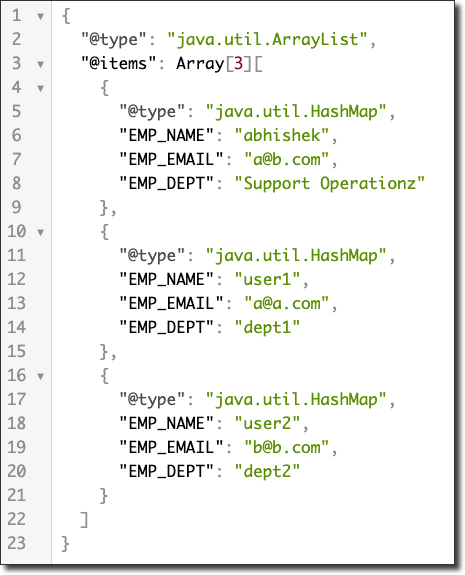
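For reference, here is a hedged sketch of what a query like this can look like with plain JDBC. It assumes the Oracle JDBC driver (18.3 or later, which accepts `TNS_ADMIN` as a URL parameter) and its wallet support jars are on the classpath. The `employees` table name, the `DB_PASSWORD` and `DB_URL` keys, and the `EmployeeQuery` class are hypothetical. To avoid guessing at the table's columns, each row is returned as a generic column-to-value Map.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.SQLException;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class EmployeeQuery {

    /** Builds a thin-driver URL that points the driver at the wallet directory via TNS_ADMIN. */
    static String jdbcUrl(String tnsAlias, String walletDir) {
        return "jdbc:oracle:thin:@" + tnsAlias + "?TNS_ADMIN=" + walletDir;
    }

    /** Runs a query and returns each row as a map of column label to value. */
    static List<Map<String, Object>> queryRows(Connection conn, String sql) throws SQLException {
        List<Map<String, Object>> rows = new ArrayList<>();
        try (PreparedStatement stmt = conn.prepareStatement(sql);
             ResultSet rs = stmt.executeQuery()) {
            ResultSetMetaData meta = rs.getMetaData();
            while (rs.next()) {
                Map<String, Object> row = new LinkedHashMap<>();
                for (int i = 1; i <= meta.getColumnCount(); i++) {
                    row.put(meta.getColumnLabel(i), rs.getObject(i));
                }
                rows.add(row);
            }
        }
        return rows;
    }

    public static void main(String[] args) throws SQLException {
        // Assumption: DB_URL holds a URL such as the one produced by jdbcUrl(...).
        String url = System.getenv("DB_URL");
        if (url == null) {
            System.out.println("Set DB_URL, DB_USER and DB_PASSWORD first.");
            return;
        }
        try (Connection conn = DriverManager.getConnection(
                url, System.getenv("DB_USER"), System.getenv("DB_PASSWORD"))) {
            // Hypothetical table name; substitute your own.
            System.out.println(queryRows(conn, "SELECT * FROM employees"));
        }
    }
}
```

The try-with-resources blocks matter in a function: a warm container may handle many invocations, so leaked statements or connections add up quickly.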

And now when we invoke the function, we'll see a nice JSON array of employees:

Of course, we can also take advantage of passing data into the function. Take another function in this application that defines an Employee POJO like so:

We can persist new employees by slightly modifying the previous function:
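A minimal sketch of the insert side follows, under the assumption that the Employee POJO has just a first and last name and that the table has matching `first_name` and `last_name` columns. Both are hypothetical stand-ins, since your POJO and table will differ. The important part is that the values are passed as bind parameters rather than concatenated into the SQL string.

```java
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.SQLException;

final class EmployeeInsert {

    /** Hypothetical POJO; the real Employee class may carry different fields. */
    static final class Employee {
        final String firstName;
        final String lastName;

        Employee(String firstName, String lastName) {
            this.firstName = firstName;
            this.lastName = lastName;
        }
    }

    // Assumption: table and column names are placeholders for illustration.
    static final String INSERT_SQL =
            "INSERT INTO employees (first_name, last_name) VALUES (?, ?)";

    /** Inserts one employee using bind parameters and returns the affected row count. */
    static int insert(Connection conn, Employee employee) throws SQLException {
        try (PreparedStatement stmt = conn.prepareStatement(INSERT_SQL)) {
            stmt.setString(1, employee.firstName);
            stmt.setString(2, employee.lastName);
            return stmt.executeUpdate();
        }
    }
}
```

Because the input arrives as JSON from the caller, bind parameters are the safe default here: the driver treats the values as data, so a malicious name cannot change the statement.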

To invoke the insert function we pass a JSON object with the keys corresponding to the Employee POJO:

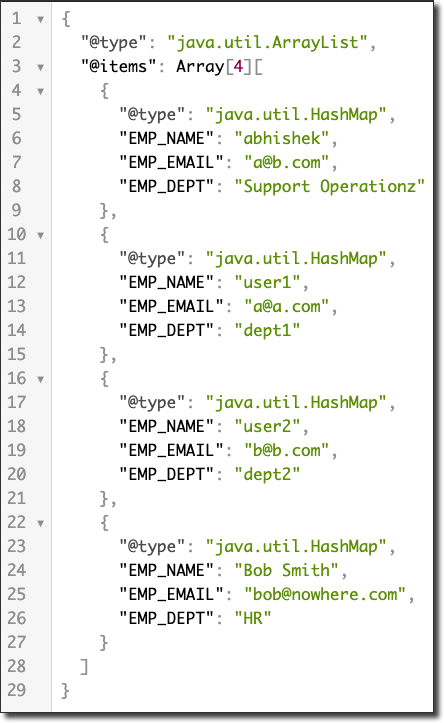

To confirm the insert, re-invoke the original function and notice the new employee:

In my next post, we'll take a look at connecting to ATP from a Node.js-based function.
