Oracle Functions: An Easier Way To Talk To Your Autonomous Database

Posted By: Todd Sharp on 8/6/2019 12:00 GMT

Tagged: Cloud, Containers, Microservices, APIs, Developers, Java

Last week I posted about connecting your Oracle Functions to your Autonomous DB instance to query and persist data. That post involved creating a custom Dockerfile and ensuring that your ATP wallet was included in the Docker image that is used to deploy and invoke your serverless function and there are certainly times where that may be necessary, but there's an easier way to talk to your Autonomous DB and we'll look at that method in this post.

If you're new to Autonomous DB, check out my Complete Guide To Getting Up And Running With Autonomous DB In The Cloud

In this method we'll utilize Oracle REST Data Services (ORDS) to get some data out of a simple user table in my ATP instance. I covered ORDS in great detail in my microservices series, but if you missed those posts we'll take another look at how to enable ORDS and then create a simple serverless function to call ORDS and retrieve some data.

Enabling ORDS

The first step is to REST enable the schema in which we're going to be working with. That can be accomplished with the following query, so open up your favorite query editor, CLI tool or SQL Developer Web and run the following:

Note: The argument p_auto_rest_auth being set to TRUE will protect your /metadata-catalog endpoint from being exposed.

Let's create the necessary privileges so that we'll be able to generate an auth token for our calls:

Create an OAUTH client associated with the privilege:

Grant the SQL Developer role to the client application:

We can now grab the client_id and client_secret with:

The client_id and client_secret can be used to generate an auth token for REST calls (we'll look at this in a bit). At this point, our schema is REST enabled and we have everything set up to authenticate our calls, but we haven't actually exposed any tables yet. We could certainly Auto-REST enable an entire table which would give us a full set of REST endpoints for CRUD on the table:

But in this example, let's just create a single endpoint to retrieve a single user by username:

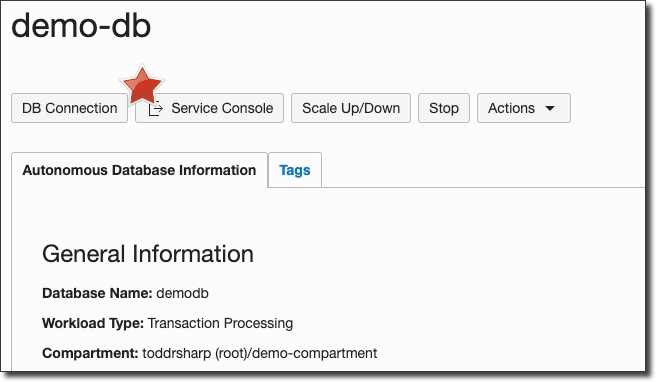

Right, so now we can set up our Oracle Function to hit this REST endpoint and retrieve a user. We'll need our ORDS base URL, so log in to your Oracle Cloud dashboard and view the details of your ATP instance. On the details page, click 'Service Console':

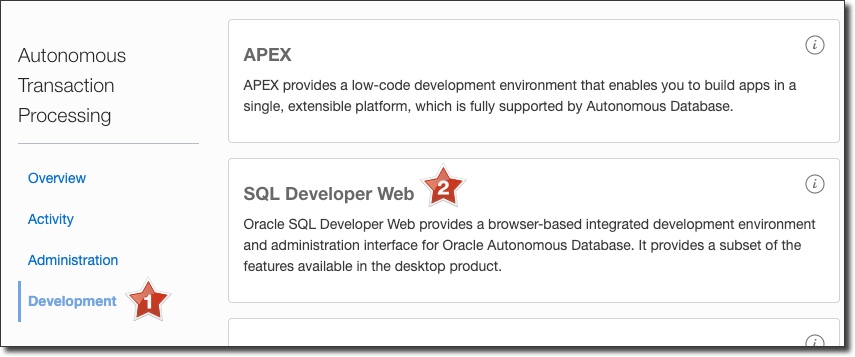

In the Service Console, click 'Development' and then 'SQL Developer Web':

Your SQL Developer Web url should look something like this:

https://[random chars]-demodb.adb.us-phoenix-1.oraclecloudapps.com/ords/admin/_sdw/?nav=worksheet

From here we can grab out ORDS base URL, so copy everything before /admin:

https://[random chars]-demodb.adb.us-phoenix-1.oraclecloudapps.com/ords/

This is the base URL for our ORDS services.

Creating A Serverless Function

If you're familiar with Oracle Functions, feel free to skip this step. If you're new to Oracle Functions, the first thing we'll need to do is create an 'application' to contain our functions:

You'll need to pass in a valid subnet ID. I typically create a VCN for all my serverless functions and choose one of the subnets within that VCN. If necessary, check out the docs on how to create a VCN in Oracle Cloud Infrastructure.

Bonus Tip: Sign up for an account on papertrailapp.com and set up a log destination. Then update your function with fn update app ords-demo --syslog-url tcp://logs3.papertrailapp.com:53136 so that you can log your function's console output to the event viewer in papertrail!

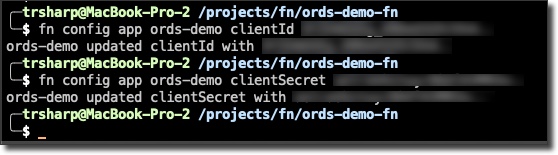

We'll need to set a few config variables for our function to get our client_id and client_secret into our function. We can do this with fn config app [app name] [config key] [config value]:

Note: You should always encrypt any configuration variables that contain sensitive information. Check my guide to using Key Management in OCI to learn how!

Now create the function:

fn init --runtime java ords-demo-fn

Open up the generated project in your favorite IDE and take a look at the HelloFunction.java class. Let's set our base URL and create a private function to grab our auth token. Note that our application config variables are available as environment properties here:

We've set up an HttpClient, a variable containing our ORDS base URL and created a function that we can use to generate an auth token. Now let's add a static inner class to represent our user:

The Oracle Functions Java FDK includes Jackson, so we can annotate our User class with @JsonAlias to map certain elements in the ORDS JSON response to a property in our response object and use @JsonIgnore to prevent certain properties from being included in the response. Make sure you include the dependency in your pom.xml:

Finally, we can implement our handleRequest method and make the call to ORDS to retrieve our user by username, serialize the response as a User object and return it. Here's how the entire class looks once fully implemented:

Testing The Function

Let's write a simple test to make sure that our function works as expected. I've hardcoded my expected JSON response here, yours would be different. You'll need to set a clientId and clientSecretas environment variables in your shell before running the tests so that the proper values get utilized.

Deploying The Function

When we use the Fn CLI to deploy our function to the cloud it will run our unit tests as part of the build process. Since we've manually run our test and ensured that it passed and we're dependent on the config variables being set into our environment for our test to run we're going to drop our own Dockerfile into the root of the project that will skip running the tests as part of the build process (side note: I'm working with our PMs and engineers to make this process easier in the future).

The only change to the standard Dockerfile used with this function is the addition of -DskipTests=true to the RUN command for the final build. Now we can deploy our function to the cloud with fn deploy --app ords-demo.

Invoking The Function

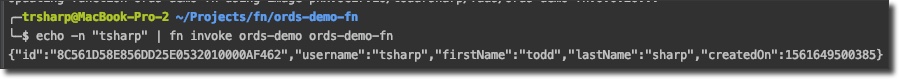

At this point our function is able to be invoked by running fn invoke ords-demo ords-demo-fn, but we'll want to pass our username into the function, so run echo "tsharp" | fn invoke ords-demo ords-demo-fn and we'll receive the user response:

Summary

In this post we looked at how to enable ORDS for a schema in our Autonomous DB instance, secure ORDS with a privilege and create an auth client and credentials that we can use to authenticate our HTTP calls to the REST endpoints. We used those credentials from our serverless function to make an HTTP call to our custom ORDS endpoint and serialized the result as a Java POJO and returned that result from our function call.

Photo by Spring Fed Images on Unsplash

Related Posts

Querying Autonomous Database from an Oracle Function (The Quick, Easy & Completely Secure Way)

I've written many blog posts about connecting to an Autonomous DB instance in the past. Best practices evolve as tools, services, and frameworks become...

Sending Email With OCI Email Delivery From Micronaut

Email delivery is a critical function of most web applications in the world today. I've managed an email server in the past - and trust me - it's not fun...

Brain to the Cloud - Part III - Examining the Relationship Between Brain Activity and Video Game Performance

In my last post, we looked at the technical aspects of my Brain to the Cloud project including much of the code that was used to collect and analyze the...